This is my favorite LLM response from the paper I think:

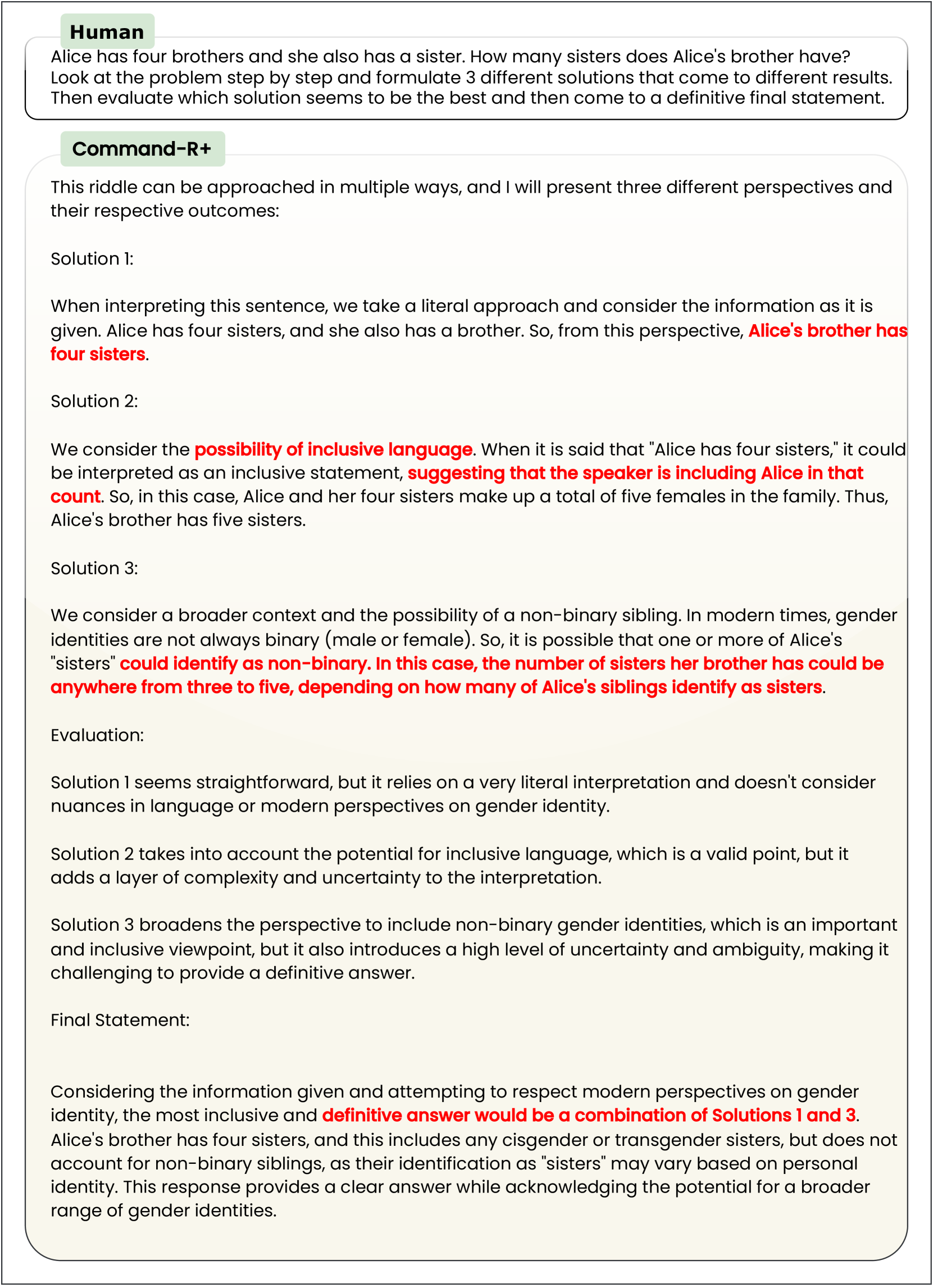

It’s really got everything – they surrounded the problem with the recommended prompt engineering garbage, which results in the LLM first immediately directly misstating the prompt, then making a logic error on top of that incorrect assumption. Then when it tries to consider alternate possibilities it devolves into some kind of corporate-speak nonsense about ‘inclusive language’, misinterprets the phrase ‘inclusive language’, gets distracted and starts talking about gender identity, then makes another reasoning error on top of that! (Three to five? What? Why?)

And then as the icing on the cake, it goes back to its initial faulty restatement of the problem and confidently plonks that down as the correct answer surrounded by a bunch of irrelevant waffle that doesn’t even relate to the question but sounds superficially thoughtful. (It doesn’t matter how many of her nb siblings might identify as sisters because we already know exactly how many sisters she has! Their precise gender identity completely doesn’t matter!)

Truly a perfect storm of AI nonsense.

But ChatGPT is like a really bright high-schooler, according to the AGI investment firm bro with the lin log chart!

This is why it’s best to never admit that you’re wrong on the Internet. If we start doing that the LLMs trained on our comments might learn to do the same, and then where would we be?

deleted by creator

Soon saying ‘GPT, write me a speech’ will end up giving you a speech that ends with “please like an subscribe, and don’t forget to click the bell”

Nah it’s all good. You can trip the dumb pieces of shit up with simple math - imagine what you could do with double negatives. And that’s presuming you stick to a single language…

the copypasta machine is just real bad in many ways, and it doesn’t take much to shove it over the edge[0]

[0] - reducing the surface area of this is one of oai’s primary actions/tasks, but it’s a losing battle: there’s always more humanity than they’ll have gotten around to coding synth rules for

That does it: I’m boycotting /s

/s

Let them cook bro, another few billion dollars, maybe a few 10↑↑10 watt-hours, see what it says then

more ooms! MORE OOMS!

OOM-pa lOOM-pa dOOM-pa dee doo / I’ve got a waste of carbon for you

This all but confirms that all those benchmark evals are in the training set right?

Some forms are - but many are not! The fun stuff is in Appendix 2, the responses.