Want to wade into the snowy surf of the abyss? Have a sneer percolating in your system but not enough time/energy to make a whole post about it? Go forth and be mid.

Welcome to the Stubsack, your first port of call for learning fresh Awful you’ll near-instantly regret.

Any awful.systems sub may be subsneered in this subthread, techtakes or no.

If your sneer seems higher quality than you thought, feel free to cut’n’paste it into its own post — there’s no quota for posting and the bar really isn’t that high.

The post Xitter web has spawned so many “esoteric” right wing freaks, but there’s no appropriate sneer-space for them. I’m talking redscare-ish, reality challenged “culture critics” who write about everything but understand nothing. I’m talking about reply-guys who make the same 6 tweets about the same 3 subjects. They’re inescapable at this point, yet I don’t see them mocked (as much as they should be)

Like, there was one dude a while back who insisted that women couldn’t be surgeons because they didn’t believe in the moon or in stars? I think each and every one of these guys is uniquely fucked up and if I can’t escape them, I would love to sneer at them.

(Credit and/or blame to David Gerard for starting this. A lot of people didn’t survive January, but at least we did. This also ended up going up on my account’s cake day, too, so that’s cool.)

“Amazon plunges 9%, continues Big Tech’s $1 trillion wipeout as AI bubble fears ignite sell-off”

https://www.cnbc.com/2026/02/06/ai-sell-off-stocks-amazon-oracle.html

What a shame

https://www.nytimes.com/2026/01/10/world/europe/icc-judges-us-sanctions-trump.html

https://www.axios.com/2026/01/20/amazon-prices-trump-tariffs-andy-jassy-davos

https://www.nytimes.com/2026/01/28/business/media/amazon-melania-trump-film-critics.html

https://www.cnn.com/2026/02/01/business/melania-box-office-amazon

https://www.cnbc.com/2026/01/20/amazon-jassy-trump-tariffs-prices-shoppers.html

https://www.washingtonpost.com/politics/2025/11/03/trump-ballroom-donors-contracts-enforcement/

https://thehill.com/homenews/campaign/4962902-billionaires-2024-election-bezos-musk/

“Fund a Fascist and Find Out”

https://www.cnn.com/2025/04/09/tech/tech-leaders-supported-trump-lost-money-dg

https://www.commondreams.org/news/amazon-tax-breaks

https://www.cnbc.com/2025/04/23/trump-inauguration-donors-include-meta-amazon-target-delta-ford.html

https://www.wsj.com/business/trumps-tax-law-sharply-cuts-amazons-corporate-tax-bill-ee94ac24

Boycott these too

https://www.sfchronicle.com/bayarea/article/apple-google-trump-white-house-ballroom-donors-21118578.php

Tangentially on topic:

Just finished The Regicide Report by friend of the instance Charles Stross. Hell of a finish to the main series! I’ll likely start a re-read of the whole series soon, and I’m hopeful that it’ll win all the awards.

Had a couple of shower thoughts afterward:

- In the previous novel, a bunch of American computer bois with brainworms concocted a plan to disassemble the moon and turn it into orbital datacenters, which is lol

- Ghislaine Maxwell is the Iris Carpenter of pedos.

- Keeping speculative fiction ahead of current events must be exhausting.

@o7___o7 @techtakes That’s why I’m fleeing screaming back to the arms of far-future space opera ATM.


I reread the last couple of ‘main line’ Laundry Files books before I dived into the latest. The complete capture of the US government by The Sleeper’s minions tracks what’s actually happened since in the US. I had a panic attack and needed a day or two to calm down.

Same thing happening in the UK now. I can’t think of anywhere safe to hide.

I hear ya. I mean, Stross’s George Clooney stood and fought the horrors, while ours ran away to France. (The book really captured the unique American flavor of High Weirdness and the stickiness of America’s belief in its own mythos, didn’t it though?)

In the here and now, I try to remind myself that they’re the ones who suck and that they should hide.

When Woke 2 comes we’re going to be insufferable.


The headline alone is worthy of upvoting. About halfway through the article, the author includes an embedded YouTube video of the Dilberito Flash game. Made me reflect that 20 years ago, they might simply have directly embedded the game itself. And contemplate what the Web might look like if/when external YouTube embedding craps out.

And goddamn:

his former syndicate, publisher, and professional organizations have all declined to pay tribute or even acknowledge his passing.

I didn’t realize it was quite that harsh, but so it goes. Play stupid games, win stupid prizes.

Re datacenters in space:

Multiple hackernews insist that SpaceX must have discovered new physics that solves orbital heat management, because otherwise Musk and the stockholders are dumb.

https://news.ycombinator.com/item?id=46862222

Edit: may have gotten the ol URL switcharoo:

https://news.ycombinator.com/item?id=46862170

Current top comment is nice (https://news.ycombinator.com/item?id=46862435):

it is possible to put 500 to 1000 TW/year of AI satellites into deep space, meaningfully ascend the Kardashev scale and harness a non-trivial percentage of the Sun’s power

We currently make around 1 TW of photovoltaic cells per year, globally. The proposal here is to launch that much to space every 9 hours, complete with attached computers, continuously, from the moon.

edit: Also, this would capture a very trivial percentage of the Sun’s power. A few trillionths per year.
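The arithmetic behind the "every 9 hours" and "a few trillionths" figures can be checked with a rough sketch like the one below. The 1 TW/year production figure and the 500 to 1000 TW/year target come from the comments above; the solar luminosity constant (about 3.828e26 W) and the function names are assumptions added here for illustration.

```python
# Back-of-the-envelope check of the numbers quoted in the thread.
HOURS_PER_YEAR = 365.25 * 24          # 8766 hours
PV_PRODUCTION_TW_PER_YEAR = 1.0       # rough global PV output, per the comment
SOLAR_LUMINOSITY_W = 3.828e26         # assumption: nominal solar luminosity


def hours_between_batches(target_tw_per_year,
                          production_tw_per_year=PV_PRODUCTION_TW_PER_YEAR):
    """Hours between launches of one full year of today's PV production."""
    batches_per_year = target_tw_per_year / production_tw_per_year
    return HOURS_PER_YEAR / batches_per_year


def fraction_of_sun(power_tw):
    """Fraction of the Sun's total output represented by `power_tw` terawatts."""
    return power_tw * 1e12 / SOLAR_LUMINOSITY_W


for target in (500, 1000):
    print(target, "TW/year:",
          round(hours_between_batches(target), 1), "hours per batch,",
          f"{fraction_of_sun(target):.1e} of solar output added per year")
```

At the upper end of the proposal, a full year of current global production would have to go up roughly every 9 hours, and each year's additions would still amount to only a few trillionths of the Sun's output.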

SpaceX must have discovered new physics that solves orbital heat management, because otherwise Musk and the stockholders are dumb.

Truly a conundrum worthy of the XXI century

Very much “sweaty guy hovering over two buttons”

Multiple hackernews insist that SpaceX must have discovered new physics that solves orbital heat management, because otherwise Musk and the stockholders are dumb.

The leaps in logic are so idiotic “he managed to land a rocket upright, so maybe he can pull it off!” (as if Elon personally made that happen, or as if an engineering challenge and fundamental thermodynamic limits are equally solvable). This is despite multiple comments replying with back of the envelope calcs on energy generation and heat dissipation of the ISS and comparing it to what you would need for even a moderately sized data center. Or even the comments that are like “maybe there is a chance”, as if it is wiser to express uncertainty…

1,604 comments jfc

It seems that Anthropic has vibe coded a C compiler. This one is really good! The generated code is not very efficient. Even with all optimizations enabled, it outputs less efficient code than GCC with all optimizations disabled.

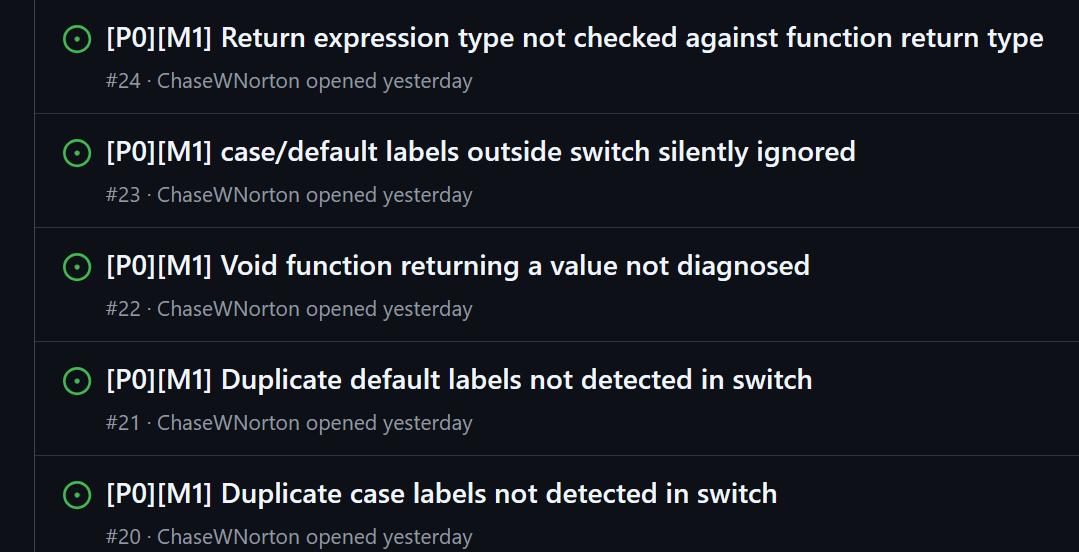

The first issue filed is called “Hello world does not compile” so you can tell it’s off to a good start. Then the rest of the six pages of issues appear to be mostly spam filed by some AI guy’s rogue chatbot.

I knew the Anthropic blog post was bullshit but every single time the reality is 10x worse than I anticipated.

@lagrangeinterpolator

That’s beginning to look like a metric…

The first issue filed is called “Hello world does not compile” so you can tell it’s off to a good start.

The comments are a hoot, at least.

@sailor_sega_saturn @dovel the nice thing about rogue chatbots is that there’s no difference between that and a non-rogue chatbot

I wonder what actual experts in compilers think of this. There were some similar claims about vibe coding a browser from scratch that turned out to be a little overheated: https://pivot-to-ai.com/2026/01/27/cursor-lies-about-vibe-coding-a-web-browser-with-ai/

I do not believe that this demonstrates anything other than they kept making the AI brute force random shit until it happened to pass all the test cases. The only innovation was that they spent even more money than before. Also, it certainly doesn’t help that GCC is open source, and they have almost certainly trained the model on the GCC source code (which the model can regurgitate poorly into Rust). Hell, even their blog post talks about how half their shit doesn’t work and just calls GCC instead!

It lacks the 16-bit x86 compiler that is necessary to boot Linux out of real mode. For this, it calls out to GCC (the x86_32 and x86_64 compilers are its own).

It does not have its own assembler and linker; these are the very last bits that Claude started automating and are still somewhat buggy. The demo video was produced with a GCC assembler and linker.

I wonder why this blog post was brazen enough to talk about these problems. Perhaps by throwing in a little humility, they can make the hype pill that much easier to swallow.

Sidenote: Rust seems to be the language of choice for a lot of these vibe coded “projects”, perhaps because they don’t want people immediately accusing them of plagiarism. But Rust syntax still reasonably follows languages like C. In most cases, blindly translating C code into Rust kinda works. Now, Rust does have the borrow checker which requires a lot of thinking to deal with, but I think this is not actually a disadvantage for the AI. Borrow checking is enforced by the compiler, so if you screw up in that department, your code won’t even compile. This is great for an AI that is just brute forcing random shit until it “works”.

I only sampled some of the docs and interesting-sounding modules. I did not carefully read anything.

First, the user-facing structure. The compiler is far too configurable; it has lots of options that surely haven’t been tested in combination. The idea of a pipeline is enticing but it’s not actually user-programmable. File headers are guessed using a combination of magic numbers and file extensions. The dog is wagged in the design decisions, which might be fair; anybody writing a new C compiler has to contend with old C code.

Next, I cannot state enough how generated the internals are. Every hunk of code tastes bland; even when it does things correctly and in a way which resembles a healthy style, the intent seems to be lacking. At best, I might say that the intent is cargo-culted from existing code without a deeper theory; more on that in a moment. Consider these two hunks. The first is generated code from my fork of META II:

while i < len(self.s) and self.clsWhitespace(ord(self.s[i])): i += 1

And the second is generated code from their C compiler:

while self.pos < self.input.len() && self.input[self.pos].is_ascii_whitespace() { self.pos += 1; }

In general, the lexer looks generated, but in all seriousness, lexers might be too simple to fuck up relative to our collective understanding of what they do. There’s also a lot of code which is block-copied from one place to another within a single file, in lists of options or lists of identifiers or lists of operators, and Transformers are known to be good at that sort of copying.

The backend’s layering is really bad. There’s too much optimization during lowering and assembly. Additionally, there’s not enough optimization in the high-level IR. The result is enormous amounts of spaghetti. There’s a standard algorithm for new backends, NOLTIS, which is based on building mosaics from a collection of low-level tiles; there’s no indication that the assembler uses it.

The biggest issue is that the codebase is big. The second-biggest issue is that it doesn’t have a Naur-style theory underlying it. A Naur theory is how humans conceptualize the codebase. We care about not only what it does but why it does it. The docs are reasonably-accurate descriptions of what’s in each Rust module, as if they were documents to summarize, but struggle to show why certain algorithms were chosen.

Choice sneer, credit to the late Jessica Walter for the intended reading: It’s one topological sort, implemented here. What could it cost? Ten lines?
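For a sense of scale, here is a minimal sketch of a textbook topological sort (Kahn's algorithm). It is a generic illustration, not the function from the compiler's codebase; the graph shape (a dict of successor lists) and the pass names in the usage example are hypothetical.

```python
from collections import deque


def topological_sort(edges):
    """Kahn's algorithm. `edges` maps each node to the nodes that must come after it."""
    # Count incoming edges for every node mentioned anywhere in the graph.
    indegree = {}
    for node, successors in edges.items():
        indegree.setdefault(node, 0)
        for succ in successors:
            indegree[succ] = indegree.get(succ, 0) + 1

    # Start from the nodes nothing depends on, and peel the graph layer by layer.
    ready = deque(n for n, d in indegree.items() if d == 0)
    order = []
    while ready:
        node = ready.popleft()
        order.append(node)
        for succ in edges.get(node, ()):
            indegree[succ] -= 1
            if indegree[succ] == 0:
                ready.append(succ)

    if len(order) != len(indegree):
        raise ValueError("graph has a cycle")
    return order


# Hypothetical pass names, purely for illustration.
passes = {"lex": ["parse"], "parse": ["lower"], "lower": ["emit"], "emit": []}
print(topological_sort(passes))
```

The sort itself is short; most of the remaining bulk in a real implementation tends to come from building the graph in the first place.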

I do not believe that this demonstrates anything other than they kept making the AI brute force random shit until it happened to pass all the test cases.

That’s the secret: any generative tool which adapts to feedback can do that. Previously, on Lobsters, I linked to a 2006/2007 paper which I’ve used for generating code; it directly uses a random number generator to make programs and also disassembles programs into gene-like snippets which can be recombined with a genetic algorithm. The LLM is a distraction and people only prefer it for the ELIZA Effect; they want that explanation and Naur-style theorizing.

This could be its own post. Very nice!

There’s a standard algorithm for new backends, NOLTIS

I think this makes it sound more cutting-edge and thus less scathing than it should, it’s an algorithm from 2008 and is used by LLVM. Claude not only trained on the paper but on all of LLVM as well.

It’s one topological sort, implemented here. What could it cost? Ten lines?

This one idk, some of it could be more concise but it also has to build the graph first using that weird seemingly custom hashmap as the source. This function, however, is immensely funny

I wonder why this blog post was brazen enough to talk about these problems. Perhaps by throwing in a little humility, they can make the hype pill that much easier to swallow.

I feel this is an artefact of the near complete collapse of mainstream journalism, combined with modern tech business practises that are about securing investment and cashing out, and every other concern is secondary or even entirely absent. It’s all just selling vibes.

People only ever report the hype, the investors see everyone else following the hype and panic that they might be left out and bury you in cash. When it all turns sour and people ask pointed questions about the exact nature of the magic beans you were promising to grow, you can just point at the blog post that no-one read (or at least, only poor people read, and they’re barely people if you think about it) and point out that you never hid anything.

I don’t even think many AI developers realize that we’re in a hype bubble. From what I see, they genuinely believe that the Models Will Improve and that These Issues Will Get Fixed. (I see a lot of faculty in my department who still have these beliefs.)

What these people do see, however, are a lot of haters who just cannot accept this wonderful new technology for some reason. AI is so magical that they don’t need to listen to the criticisms; surely they’re trivial by comparison to magic, and whatever they are, These Issues Will Get Fixed. But lately they have realized that with the constant embarrassing AI failures (AI surely doesn’t have horrible ethics as well), there are a lot of haters who will swarm the announcement of any AI project now. The haters also tend to be people who actually know stuff and check things (tech journalists are incentivized to not do that), but it doesn’t matter because they’re just random internet commenters, not big news outlets.

My theory is that now they add a ton of caveats and disclaimers to their announcements in a vain attempt to reduce the backlash. Also if you criticize them, it’s actually your fault that it doesn’t work. It’s Still Early Days. These Issues Will Get Fixed.

the Models Will Improve

I tell people that this is code for RAM and storage will cost 10x by this time next year when this comes up. Highly recommended.

We’re Still Early never dies

I wonder what actual experts in compilers think of this.

Anthropic doesn’t pay me and I’m not going to look over their pile of garbage for free, but just looking at the structure and READMEs it looks like a reasonable submission for an advanced student in a compilers course: lowering to IR, SSA representation, dominators, phi elimination, some passes like strength reduction. The register allocator is very bad though, I’d expect at least something based on colouring.

The READMEs are also really annoying to read. They are overlong and they don’t really explain what is going on in the module. There’s no high-level overview of the architecture of the compiler. A lot of it is just redundant. Like, what is this:

Ye dude, of course it doesn’t depend on the IR, because this is before IR is constructed. Are you just pretending to know how a compiler works? Wait, right, you are, you’re a bot. The last sentence is also hilarious, my brother in christ, what, why is this in the README.

Now this evaluation only makes sense if the compiler actually works - which it doesn’t. Looking at the filed issues there are glaring disqualifying problems (#177, #172, #171, #167, etc. etc. etc.). Like, those are not “oops, forgot something”, those are “the code responsible for this is broken”. Some of them look truly baffling, like how do you manage to get so many issues of the type “silently does something unexpected on error” when the code is IN RUST, which is explicitly designed to make those errors as hard as possible? Like I’m sorry, but the ones below? These are just “you did not even attempt to fulfill the assignment”.

It’s also not tested, it has no integration tests (even though the README says it does), which is plain unacceptable. And the unit tests that are there fail so lol, lmao.

It’s worse than existing industry compilers and it doesn’t offer anything interesting in terms of the implementation. If you’re introducing your own IR and passes you have to have a good enough reason to not just target LLVM. Cranelift is… not great, but they at least have interesting design choices and offer quick unoptimized compilation. This? The only reason you’d write this is you were indeed a student learning compilers, in which case it’d be a very good experience. You’d probably learn why testing is important for the rest of your life at least.

I wonder if this is going to hold out long enough to get some obnoxious AI-first language created that is designed to have as obnoxiously picky of a compiler as it can in order to try and turn runtime errors that the model can’t cope with into compile failures which it can silently retry until they’re ‘fixed’

The fact it doesn’t have an assembler or linker, and I am doubting it implemented its own lexical analyzer, I almost struggle to call this a compiler.

The claim it is from scratch is misleading since it has all prior training from open source.

Building a small compiler for a simple language (C is pretty simple, especially older versions) is a common learning exercise and not difficult. This is very much another situation where “AI” created an over simplified version of something with hidden details on how it got there as a way to further push the propaganda that it is so capable.

Waiting for some promptfondler to complain that this kind of assignment is not really fair for an AI because it has actual requirements.

This could be regarded as a neat fun hack, if it wasn’t built by appropriating the entire world of open source software while also destroying the planet with obscene energy and resource consumption.

And not only do they do all that… it’s also presented by those who wish this to be the future of all software. But for that, a “neat fun hack” just isn’t enough.

Can LLMs produce software that kinda works? Sure, that’s not new. Just like LLMs can generate books with correct grammar inside, and vaguely about a given theme. But is such a book worth reading? No. And is this compiler worth using? Also no.

(And btw, this approach only works with an existing good compiler as “oracle”. So forget about doing that to create a new compiler for a new language. In addition, there’s certainly no other language with as many compilers as C, providing plenty of material for the training set.)

This could be regarded as a neat fun hack, if it wasn’t built by appropriating the entire world of open source software

This shouldn’t be left merely implied, the autoplag trained on GCC, clang, and every single poor undergrad who had to slap together a working C compiler for their compilers course and uploaded it to github, and “learnt” fuckall

did you have a point you wanted to make?

There seem to be a few people here now who just dump unrelated links without any explanation. (See also the one who just posted a dozen, which all seem to mention Trump). No idea what they are trying to do.

this, yeah

not everybody heard of gas town within first two weeks

OT: paying the cat tax…again. Please ignore the ash on Hector’s head, it’s an ongoing mystery where that’s been coming from.

You can basically tell their personalities from the photo.

once again, the facade of the “whoops, bad company” falls to the ground the moment she needs her hands to fill the pompoms instead of hold up the venetian mask

transcript

a quote tweet by @XiWellWisher, reads: “So what’s the deal with this ghastly woman again? She’s a sort of silicon valley Ghislaine Maxwell?”

the quoted tweet by aella reads: “There’s apparently a pro-billionaire protest in SF on the 7th. I might go to this to support! Anybody else going?”

also, real weird account name on that account, wonder if it’s a sock

robin hanson blocked me for referring to him as aella with tenure. now i think that he’s ghislaine maxwell with tenure

“pro-billionaire” sorry I just threw up in my mouth a little. Also the billionaires are the ones rushing to create the thing so many rationalists claim is an existential risk, why the fuck would you support them??

Yeah, @XiWellWisher going up against Aella on X, The Everything App is well into late-SNL stages of unfunny parody

i don’t find that name too strange, it’s a post-ironic Online Leftist shibboleth

Bruh

I see that Silicon Valley has transcended AGI technology* and can now execute NP-complete** problems.

* A Guy in India

** Nationals from the Philippines, Completely

WAYMO exec admits under oath cars in the US have “human operators” based in Philippines

https://www.youtube.com/watch?v=ClPDbwql34o

Ryan Mac:

Epstein had many known connections to Silicon Valley CEOs, but less known was how he made money from those relationships.

We did a deep dive into how he got dealflow in Silicon Valley, giving him shots to invest in Coinbase, Palantir, SpaceX and other companies.

For example, here is Coinbase cofounder Fred Ehrsam in 2014 emailing w/ people around Epstein, including crypto entrepreneur Brock Pierce, asking to meet Epstein before the financier invested $3m in Coinbase.

Coinbase was a two year old startup. Epstein netted multimillion dollar returns from this.

Here is Epstein asking Peter Thiel if he should invest in Spotify or Palantir. Thiel was (and still is) Palantir’s chairman and tells Epstein there is “no need to rush.” This is one of several emails where Thiel gives Epstein advice.

Epstein later invested $40m into one of Thiel’s VC funds.

One of @ering.bsky.social’s great file finds: Epstein tried to help create a tech fund shortly before he was arrested in 2019 with two tech types. One of his partners, however, was worried about the “optics” of telling founders that Epstein was involved.

So they suggested Epstein conceal himself.

At the end of his life, Epstein had assets of around $600m. A large part of that was due to his ability to get in early to hot tech deals. The returns he made off those deals helped fund his lifestyle.

[…]

While reporting this, I had something happen that’s never happened. A comms rep for one of the co’s disputed my reporting and said what I was telling them was untrue because it was not in Grok, xAI’s chatbot.

I was looking directly at the files. And this person was using AI to challenge the truth.

https://bsky.app/profile/rmac.bsky.social/post/3me4wmrgic226

I was looking directly at the files. And this person was using AI to challenge the truth.

These are the people who come next election will be voting strictly according to an AI’s say so.

The common clay of the new west:

transcription

Twitter post from @BenjaminDEKR

“OpenClaw is interesting, but will also drain your wallet if you aren’t careful. Last night around midnight I loaded my Anthropic API account with $20, then went to bed. When I woke up, my Anthropic balance was $0. Opus was checking “is it daytime yet?” every 30 minutes, paying $0.75 each time to conclude “no, it’s still night.” Doing literally nothing, OpenClaw spent the entire balance. How? The “Heartbeat” cron job, even though literally the only thing I had going was one silly reminder, (“remind me tomorrow to get milk”)”

Continuation of twitter post

“1. Sent ~120,000 tokens of context to Opus 4.5

2. Opus read HEARTBEAT.md, thought about reminders

3. Replied “HEARTBEAT_OK”

4. Cost: ~$0.75 per heartbeat (cache writes)

The damage:

- Overnight = ~25+ heartbeats

- 25 × $0.75 = ~$18.75 just from heartbeats alone

- Plus regular conversation = ~$20 total

The absurdity: Opus was essentially checking “is it daytime yet?” every 30 minutes, paying $0.75 each time to conclude “no, it’s still night.”

The problem is:

- Heartbeat uses Opus (most expensive model) for a trivial check

- Sends the entire conversation context (~120k tokens) each time

- Runs every 30 minutes regardless of whether anything needs checking

That’s $750 a month if this runs, to occasionally remind me stuff? Yeah, no. Not great.”
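The heartbeat arithmetic in the post can be reproduced with a small sketch. The $0.75 per heartbeat and the 30 minute interval are taken from the post; the function name and the 30-day month are assumptions made here.

```python
COST_PER_HEARTBEAT_USD = 0.75   # from the post (~120k tokens of context per check)
INTERVAL_MINUTES = 30           # from the post


def heartbeat_cost(hours, cost=COST_PER_HEARTBEAT_USD,
                   interval_minutes=INTERVAL_MINUTES):
    """Total spend for `hours` of idle heartbeats at a fixed interval."""
    heartbeats = hours * 60 / interval_minutes
    return heartbeats * cost


# 25 heartbeats at one per 30 minutes is 12.5 hours of doing nothing.
print("overnight:", heartbeat_cost(12.5))

# Assumption: a 30-day month with the job running around the clock.
print("30 days:", heartbeat_cost(24 * 30))
```

Run strictly every 30 minutes around the clock, the same rate comes out somewhat above the $750 a month quoted in the post, so that figure reads as a rough estimate rather than an exact projection.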

There are other posts of the same story that include the original “dev” learning his lesson by using a cheaper model instead of just using a clock.

https://bsky.app/profile/rusty.todayintabs.com/post/3mdrdn3uu7226

There’s also a hackernews which is interesting : https://news.ycombinator.com/item?id=46854150

Stupid stuff openclaw did for me:

- Created its own github account, then proceeded to get itself banned (I have no idea what it did, all it said was it created some new repos and opened issues, clearly it must’ve done a bit more than that to get banned)

- Signed up for a Gmail account using a pay as you go sim in an old android handset connected with ADB for sms reading, and again proceeded to get itself banned by hammering the crap out of the docs api

- Used approx $2k worth of Kimi tokens (Thankfully temporarily free on opencode) in the space of approx 48hrs.

Unless you can budget $1k a week, this thing is next to useless. Once these free offers end on models a lot of people will stop using it, it’s obscene how many tokens it burns through, like monumentally stupid. A simple single request is over 250k chars every single time. That’s not sustainable.

I hadn’t realised quite how terrible the basic offering was. I guess every reinvented-cron-but-unaffordable project pushes the ai companies a little closer to bankruptcy, which is better than nothing, I guess.

$1000 a week?? Even putting aside literally all of the other issues of AI, it is quite damning that AI cannot even beat humans on cost. AI somehow manages to screw up the one undeniable advantage of software. How do these people delude themselves into thinking that the dogshit they’re eating is good?

As a sidenote, I think after the bubble collapses, the people who predict that there will still be some uses for genAI are mostly wrong. In large part, this is because they do not realize just how ruinously expensive it is to run these models, let alone scrape data and train them. Right now, these costs are being subsidized by venture capitalists putting their money into a furnace.

How do these people delude themselves into thinking that the dogshit they’re eating is good?

They think it’s just that they’re early, like they did with bitcoin. Maybe in six months the dogshit will start to taste great, who’s to say, and so on and so forth.

Also swengs in the USA often make absurdly more than 1K/week.

I guess I can check back in six months to see how they’re doing … wait a minute, they were saying the same things six months ago, weren’t they? That’s a bummer.

Well, sure, but that was six months ago.

Tired: it’s required to taste

Wired: it’s an acquired taste

they’re already pivoting to the narrative that “local models will be plenty good enough and it will be trivially affordable to run them”

and it will be trivially affordable to run them

ON WHAT HARDWARE BEN, DDR2 SCAVENGED FROM JUNKYARDS???

i still think that lots of people damaged by chatbots will stop in their tracks when this vc money burning charade ends, they won’t be able to set it all up locally because chatbots brainrotted them even if it was possible in the first place

thankfully temporarily free

god I can’t wait for the subsidies to end

Bit early to celebrate, but every bit of grit in the wheels of the llm machine is welcome: Microsoft is walking back Windows 11’s AI overload — scaling down Copilot and rethinking Recall in a major shift

- recall might be rethought, again

- copilot integration in the most stupid places (notepad, paint, maybe others) “under review”

- no new copilot integration with other tools that ship with windows

Still plenty of other ai projects going full steam ahead, but promotion in plenty of tech companies and especially microsoft comes with being associated with a product launch, and if you’re smart what happens after the launch is someone else’s problem. I wouldn’t be surprised to see plenty of this stuff clinging on until it reaches consumers, and then being immediately “scaled back”.

R3call

Business plan: daily reminders to Recall the Recall Recall. It’s memento mori for CEOs as a service.

Does (deservedly) mercilessly bullying Slopya Nadella actually work?

Today in excellent cold opens: “I didn’t talk to ChatGPT, I never have. Instead, I took a load of edibles and laid down in the driveway with the hose on. I produced nothing of value and wasted a ton of water, but at least I ate three protein bars so I’m so healthy.”

Enjoyed this piece from Mission Local on San Francisco’s “March for Billionaires” yesterday.

Choice excerpts:

Despite the San Francisco locale, a participant said the event had “grassroots” origins at a “little rationalist restaurant get together” in a “group house” on Shattuck Avenue, subverting any assumptions that Berkeley is all radical hippies.

Mission Local contributor Benjamin Wachs coined a term for an event in which media observers outnumber participants: a panopticonference. This was close to that. Those in attendance did their best to field questions from the barrage of journalists that backed them into a tree.

This is where Annie, a young transgender woman who attended the protest in a T-shirt that said “I’m in a polycule with Aella,” first met Kauffman. An impromptu debate ensued, with Annie “aggressively defending billionaires.” It was, participants concluded, worthy of a larger forum.

“People are just jealous that they are poorer and weaker and uglier,” she said. “We are beautiful. We’re smart. We’re strong… We are supporting the billionaires, here.”

A polycule with Aella, otherwise known as a nightmare fuck rotation

Scum.

edit: reasonably certain annie is annieposting from tpot.

annieposting

I assumed billionaires could afford better signs. Were all her EAs on leave that week?

“People are just jealous that they are poorer and weaker and uglier,”

Remember when Rationalists pretended to care about truth, steelmanning, ideological Turing tests etc.

(Also implying that billionaires are strong and attractive is funny)

subverting any assumptions that Berkeley is all radical hippies

Yall still are radical hippies. Some hippies just love the boot.

“California is, I believe, the only state to give health insurance to people who come into the country illegally,” Kauffman said nervously. “I think we probably should not be providing that.”

Rationalism, the empathy removal training center.

“It is the intention of journalists to lie, which is why we need to not do anything to the journalists themselves, but we need to simply remove them as a class,” Annie said. “Just like Germany does to the extremist organizations.”

Well, Germany certainly did excel at removing classes of people from society

lol.

Her political awakening, she added, was watching the press “constantly pump out obviously fake information” against Trump during the 2016 election instead of reporting on the “actual abhorrent views he holds.”

Converted by Scott. (That ‘people are saying I was wrong but actually I was right’ disclaimer aged worse than the post).

gag. going full mask off now

Did any actual billionaires show up to press their case, or was it all just cronies?

from bsky photos looks like entire gathering was 30 people. t h i r t y  p e o p l e. i might have counted some reporter or someone passing by randomly by accident

But why are we talking about some AI agent platform in the Urbit newsletter? Naturally because we think, Urbit fixes this.

As a matter of fact, Tlon is already working on this with their Openclaw Plugin for Tlon Messenger. It is currently in an early adopter phase, but they expect to provide an instance of Openclaw with every ship that they host for their users.

but of course

desperately trying to latch themselves onto the coattails of whatever passes for cool among nerds these days

So Krauss tried to introduce Joe Rogan to Epstein

But Rogan may have been unwilling to do so

How is it Joe Rogan is (possibly) the smartest person in this situation?

Similarly, what’s going on with Charles Murray (Bell Curve) ???

He converted to christianity? https://www.amazon.com/Taking-Religion-Seriously-Charles-Murray/dp/1641774851

But now supports euthanasia? https://www.compactmag.com/article/how-i-changed-my-mind-on-assisted-suicide/

Over in the epstein files, Jim Watson tried to make an intro but Murray never replied? https://www.justice.gov/epstein/files/DataSet 9/EFTA00475960.pdf

Apparently he’s a Quaker, so maybe that’s how the euthanasia stance can pass muster. But Quakerism might also make even less sense with his views on race? I don’t know enough about the reality of Quakerism to say.

Also, looks like Harris also deliberately side-stepped the dinner bait but I don’t know how much of that was because of Chomsky’s presence. Epstein tried again a year later without the Chomsky attendee name-drop, but Harris might have just not replied.

At least there are no surprises with Dawkins, even his sleazy friend Brockman seemingly finds him tiring

Glib jibes aside, I haven’t been able to bring myself to look at many of the docs that aren’t just quasi-celeb emails, the few I did see were far too much for me. I’m horrified at nearly everyone from all ideological stances on a number of different levels I never considered. I can only hope the remaining victims someday are able to find some peace, and some kind of huge systemic reform can come from this. What a vile world we live in.

being a member of the old boy club beats ideological differences.

Apparently he’s a Quaker, so maybe that’s how the euthanasia stance can pass muster. But Quakerism might also make even less sense with his views on race? I don’t know enough about the reality of Quakerism to say.

Quakers have a history of being anti-racist, but views on stuff like abortion and euthanasia can vary a lot. Quakerism is big on both individual conscience and social justice and activism. It’s an interesting denomination.